Sparse Video Understanding

Today it is common for places such as stores, parking lots, and train stations to be monitored by a considerable number of video cameras, as are sports and other televised events. Multiple cameras are also required to monitor large, complex environments, both because a single camera has a limited field of view and to avoid blind spots caused by occlusion.

In typical large surveillance applications, however, displaying multiple video streams on separate monitors can overwhelm human operators. A single monitor produces useful information that is easy to understand, but with multiple monitors it is difficult for humans to integrate the views into a coherent global understanding of events. For example, the simple task of tracking a moving object from monitor to monitor can become complex when several objects are moving, and the operator may not know which monitor to look at next once the object leaves a camera's field of view.

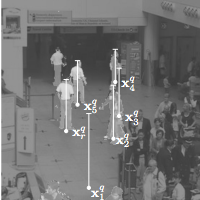

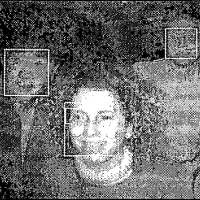

The integration of multiple videos into a 3D scene model in an augmented virtual environment (AVE) has been shown to be an effective way to cope with multiple cameras monitoring complex scenes [1–4]. The 3D visualization gives users a natural way to browse, at multiple resolutions, the spatial and temporal data provided by the cameras.
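The cited systems are not detailed here, but a basic operation behind placing video onto a 3D model is projecting model points into each calibrated camera's image. The sketch below assumes a simple pinhole camera model; the class `PinholeCamera`, its fields (`R`, `t`, `fx`, `fy`, `cx`, `cy`) and the helper `cameras_seeing` are hypothetical names chosen for illustration. The same test also answers the operator's question of which camera currently sees a given location. It deliberately ignores occlusion; a real system would add a depth test against the scene model.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class PinholeCamera:
    """Hypothetical calibrated camera (pinhole model, no lens distortion)."""
    name: str
    R: Sequence[Sequence[float]]  # 3x3 rotation, world -> camera coordinates
    t: Vec3                       # translation, world -> camera coordinates
    fx: float                     # focal length in pixels (x)
    fy: float                     # focal length in pixels (y)
    cx: float                     # principal point (x)
    cy: float                     # principal point (y)
    width: int                    # image width in pixels
    height: int                   # image height in pixels

    def project(self, p: Vec3) -> Optional[Tuple[float, float]]:
        """Return the pixel (u, v) of world point p, or None if p is out of view."""
        # Transform into camera coordinates: X_c = R * X_w + t
        xc, yc, zc = (
            sum(self.R[i][j] * p[j] for j in range(3)) + self.t[i] for i in range(3)
        )
        if zc <= 0:  # the point lies behind the camera
            return None
        # Perspective division followed by the intrinsic mapping to pixels
        u = self.fx * xc / zc + self.cx
        v = self.fy * yc / zc + self.cy
        if 0 <= u < self.width and 0 <= v < self.height:
            return (u, v)
        return None  # projects outside the image, i.e. outside the field of view


def cameras_seeing(cameras: List[PinholeCamera], p: Vec3) -> List[str]:
    """Names of cameras whose field of view contains p (occlusion is ignored)."""
    return [c.name for c in cameras if c.project(p) is not None]


# Example: camera A at the origin looking along +z; camera B at (0, 0, 10)
# rotated 180 degrees about the y axis, so it looks back along -z.
IDENTITY = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
FLIP_Y = [[-1, 0, 0], [0, 1, 0], [0, 0, -1]]
cam_a = PinholeCamera("A", IDENTITY, (0, 0, 0), 500, 500, 320, 240, 640, 480)
cam_b = PinholeCamera("B", FLIP_Y, (0, 0, 10), 500, 500, 320, 240, 640, 480)

print(cameras_seeing([cam_a, cam_b], (0, 0, 5)))   # between the cameras: both see it
print(cameras_seeing([cam_a, cam_b], (0, 0, 12)))  # behind camera B: only A sees it
```

In a handoff scenario, evaluating `cameras_seeing` at an object's estimated 3D position tells the operator which feed to watch next, instead of guessing from monitor to monitor.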

Papers